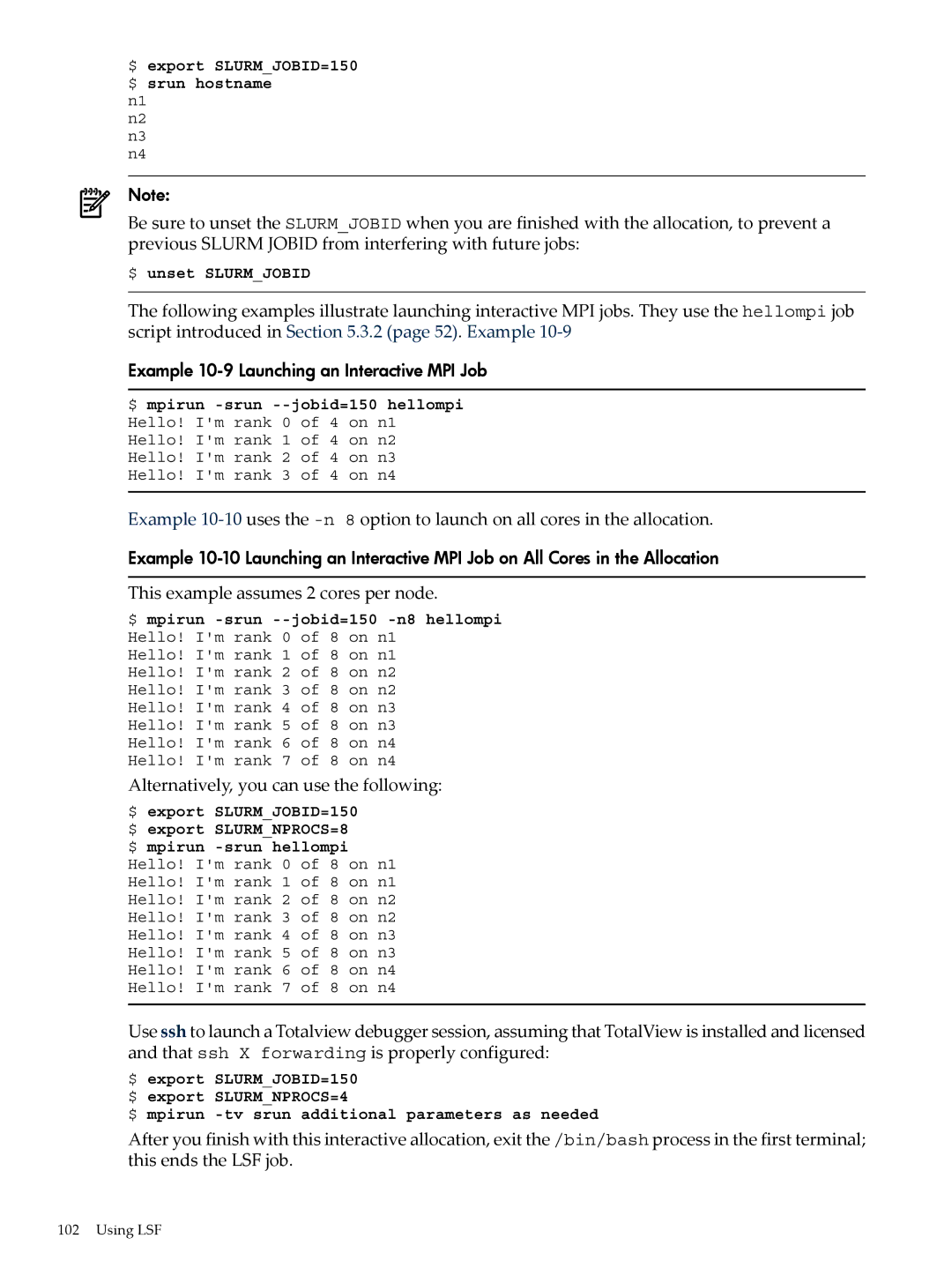

$ export SLURM_JOBID=150

$ srun hostname

n1

n2

n3

n4

Note:

Be sure to unset SLURM_JOBID when you are finished with the allocation, so that a stale SLURM_JOBID value does not interfere with future jobs:

$ unset SLURM_JOBID
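One way to avoid a forgotten unset is to confine the variable to a single command. The following is a minimal sketch, not part of SLURM or LSF: the helper name with_slurm_job is hypothetical, and the job ID 150 is taken from the example above.

```shell
# Hypothetical helper: run one command with SLURM_JOBID set for that
# command only. The function body is a subshell, so the export never
# reaches the calling shell and no later unset is needed.
with_slurm_job() (
    SLURM_JOBID="$1"      # first argument: the SLURM job ID of the allocation
    export SLURM_JOBID
    shift                 # remaining arguments: the command to run
    "$@"
)

# Usage, assuming the allocation has SLURM job ID 150:
#   with_slurm_job 150 srun hostname
```

The trade-off is that the job ID must be repeated on every invocation; for a long interactive session, the export and unset pair shown above may be more convenient.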

The following examples illustrate launching interactive MPI jobs. They use the hellompi job script introduced in Section 5.3.2 (page 52).

Example 10-9 Launching an Interactive MPI Job

$ mpirun

Example

This example assumes 2 cores per node.

$ mpirun

Hello! I'm rank 1 of 8 on n1

Hello! I'm rank 2 of 8 on n2

Hello! I'm rank 3 of 8 on n2

Hello! I'm rank 4 of 8 on n3

Hello! I'm rank 5 of 8 on n3

Hello! I'm rank 6 of 8 on n4

Hello! I'm rank 7 of 8 on n4

Alternatively, you can use the following:

$ export SLURM_JOBID=150

$ export SLURM_NPROCS=8

$ mpirun

Use ssh to launch a TotalView debugger session, assuming that TotalView is installed and licensed and that ssh X forwarding is properly configured:

$ export SLURM_JOBID=150

$ export SLURM_NPROCS=4

$ mpirun

After you finish with this interactive allocation, exit the /bin/bash process in the first terminal; this ends the LSF job.
