request more than one core for a job. This option, coupled with the external SLURM scheduler, discussed in

When you request multiple nodes, LSF reserves the requested number of nodes and executes one instance of the job on the first reserved node. Use the srun command or the mpirun command with the

Most parallel applications rely on rsh or ssh to "launch" remote tasks. The ssh utility is installed on the HP XC system by default. If you configured the ssh keys to allow unprompted access to other nodes in the HP XC system, the parallel applications can use ssh. See “Enabling Remote Execution with OpenSSH” for more information on ssh.
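One way to confirm that ssh access to another node is unprompted is to run a trivial remote command with password prompts disabled. The following is a minimal sketch, not a procedure from this guide: the node name `n2` and the helper names are hypothetical, and it assumes the OpenSSH client, whose `BatchMode=yes` option makes ssh fail rather than prompt.

```python
import subprocess


def ssh_check_argv(node):
    """Build an ssh command that runs `true` on `node` without prompting."""
    # BatchMode=yes tells OpenSSH to fail instead of asking for a
    # password or passphrase, so a prompt shows up as a nonzero exit.
    return ["ssh", "-o", "BatchMode=yes", node, "true"]


def can_ssh_unprompted(node, timeout=10):
    """Return True if `node` accepts the ssh connection without a prompt."""
    try:
        result = subprocess.run(
            ssh_check_argv(node), capture_output=True, timeout=timeout
        )
    except (OSError, subprocess.TimeoutExpired):
        # ssh is not installed, or the node did not answer in time.
        return False
    return result.returncode == 0


if __name__ == "__main__":
    # "n2" is a placeholder node name; substitute a node in your system.
    print(can_ssh_unprompted("n2"))
```

If the check returns False, parallel applications that launch remote tasks through ssh are likely to stall or fail on that node.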

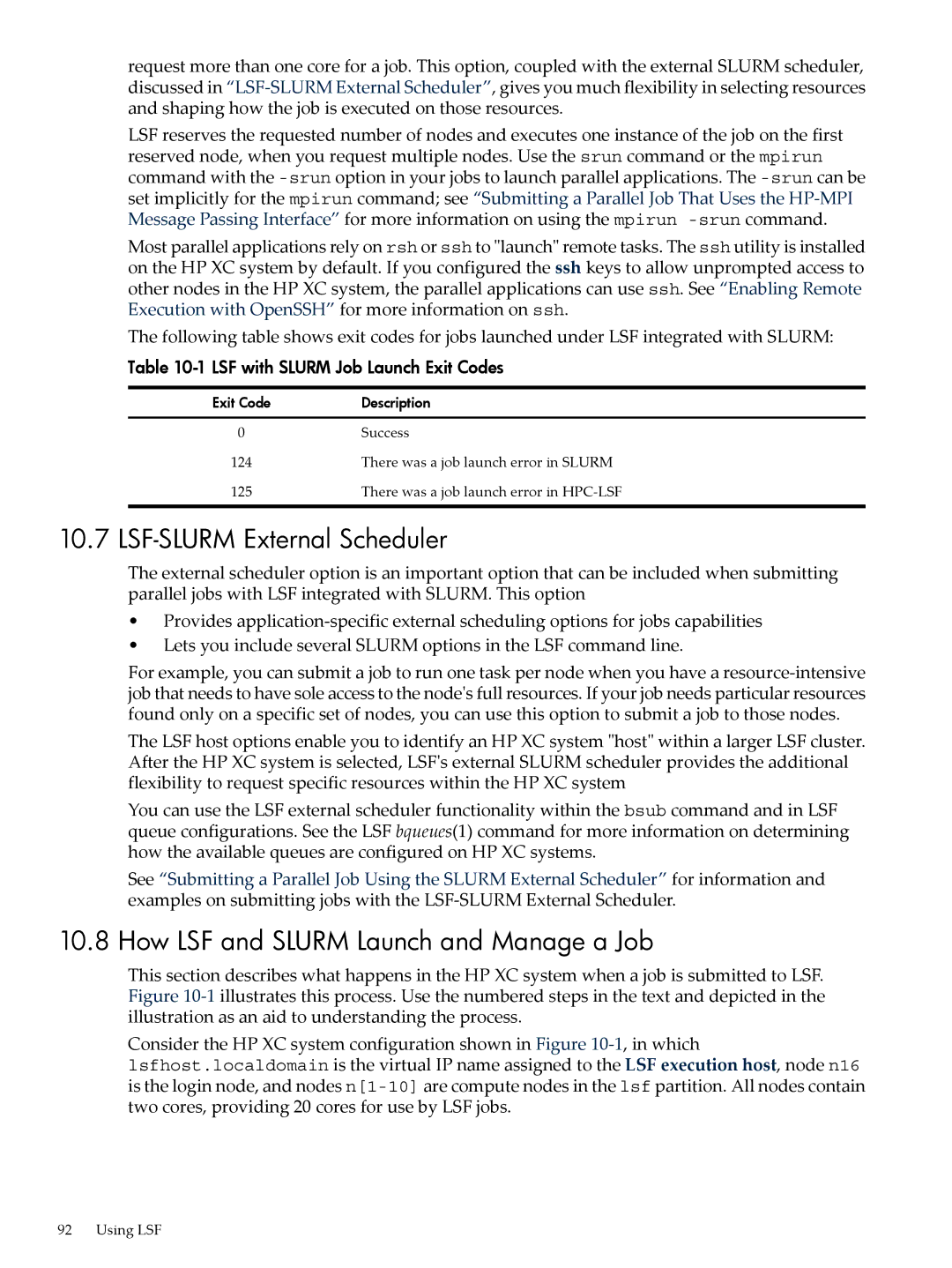

The following table shows exit codes for jobs launched under LSF integrated with SLURM:

Table 10-1 LSF with SLURM Job Launch Exit Codes

| Exit Code | Description |
| --- | --- |
| 0 | Success |
| 124 | There was a job launch error in SLURM |
| 125 | There was a job launch error in |
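A wrapper script can use these exit codes to separate launch failures from failures of the application itself. The following is a minimal sketch under stated assumptions: the function and dictionary names are hypothetical, the descriptions paraphrase the table, and any code not listed in the table is only presumed to come from the job.

```python
# Exit codes from Table 10-1, with paraphrased descriptions.
LAUNCH_EXIT_CODES = {
    0: "success",
    124: "job launch error in SLURM",
    125: "job launch error",
}


def describe_launch_exit(code):
    """Return a short description for a job launch exit code."""
    if code in LAUNCH_EXIT_CODES:
        return LAUNCH_EXIT_CODES[code]
    # Assumption: codes outside the table may be the application's own status.
    return f"exit code {code} is not listed in Table 10-1"


def is_launch_error(code):
    """Return True for the exit codes the table reports as launch errors."""
    return code in (124, 125)


if __name__ == "__main__":
    for code in (0, 124, 125, 1):
        print(code, describe_launch_exit(code))
```

Treating 124 and 125 separately from other nonzero codes lets a script retry or report a launch problem without mistaking it for an application failure.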

10.7 LSF-SLURM External Scheduler

The external scheduler option is an important one to include when submitting parallel jobs with LSF integrated with SLURM. This option:

• Provides

• Lets you include several SLURM options in the LSF command line.

For example, you can submit a job to run one task per node when you have a

The LSF host options enable you to identify an HP XC system "host" within a larger LSF cluster. After the HP XC system is selected, LSF's external SLURM scheduler provides the additional flexibility to request specific resources within the HP XC system

You can use the LSF external scheduler functionality within the bsub command and in LSF queue configurations. See the LSF bqueues(1) command for more information on determining how the available queues are configured on HP XC systems.
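As an illustration of using the external scheduler within the bsub command, the sketch below assembles a command line that requests a number of cores and a number of nodes. It is a sketch under stated assumptions, not a definitive form: the helper name is hypothetical, the `-ext "SLURM[nodes=...]"` syntax is assumed from the LSF integration with SLURM, and the example job `srun hostname` is only a placeholder. Confirm the syntax against the bsub documentation on your system before relying on it.

```python
import shlex


def build_bsub_argv(cores, nodes, job_argv):
    """Build a bsub command that passes a node count to the SLURM external scheduler."""
    if cores < 1 or nodes < 1:
        raise ValueError("cores and nodes must both be at least 1")
    if not job_argv:
        raise ValueError("job_argv must name the command to run")
    # Assumed syntax: -ext "SLURM[nodes=N]" carries the SLURM option.
    ext_option = f"SLURM[nodes={nodes}]"
    return ["bsub", "-n", str(cores), "-ext", ext_option, *job_argv]


if __name__ == "__main__":
    # Request 4 cores spread over 4 nodes, that is, one task per node.
    argv = build_bsub_argv(4, 4, ["srun", "hostname"])
    # shlex.join quotes the bracketed option so a shell passes it unchanged.
    print(shlex.join(argv))
```

Building the command as an argument list and quoting it only for display avoids shell interpretation of the square brackets.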

See “Submitting a Parallel Job Using the SLURM External Scheduler” for information and examples on submitting jobs with the

10.8 How LSF and SLURM Launch and Manage a Job

This section describes what happens in the HP XC system when a job is submitted to LSF. Figure

Consider the HP XC system configuration shown in Figure
