Figure 10-1 How LSF and SLURM Launch and Manage a Job

[Figure labels: User; login node (n16); LSF execution host (lsfhost.localdomain): job_starter.sh, srun, SLURM_JOBID=53, SLURM_NPROCS=4; compute node n1: myscript (hostname, srun hostname, mpirun); compute nodes n1, n2, n3, and n4: hostname. Callouts 1 through 7 mark the steps of the job launch.]

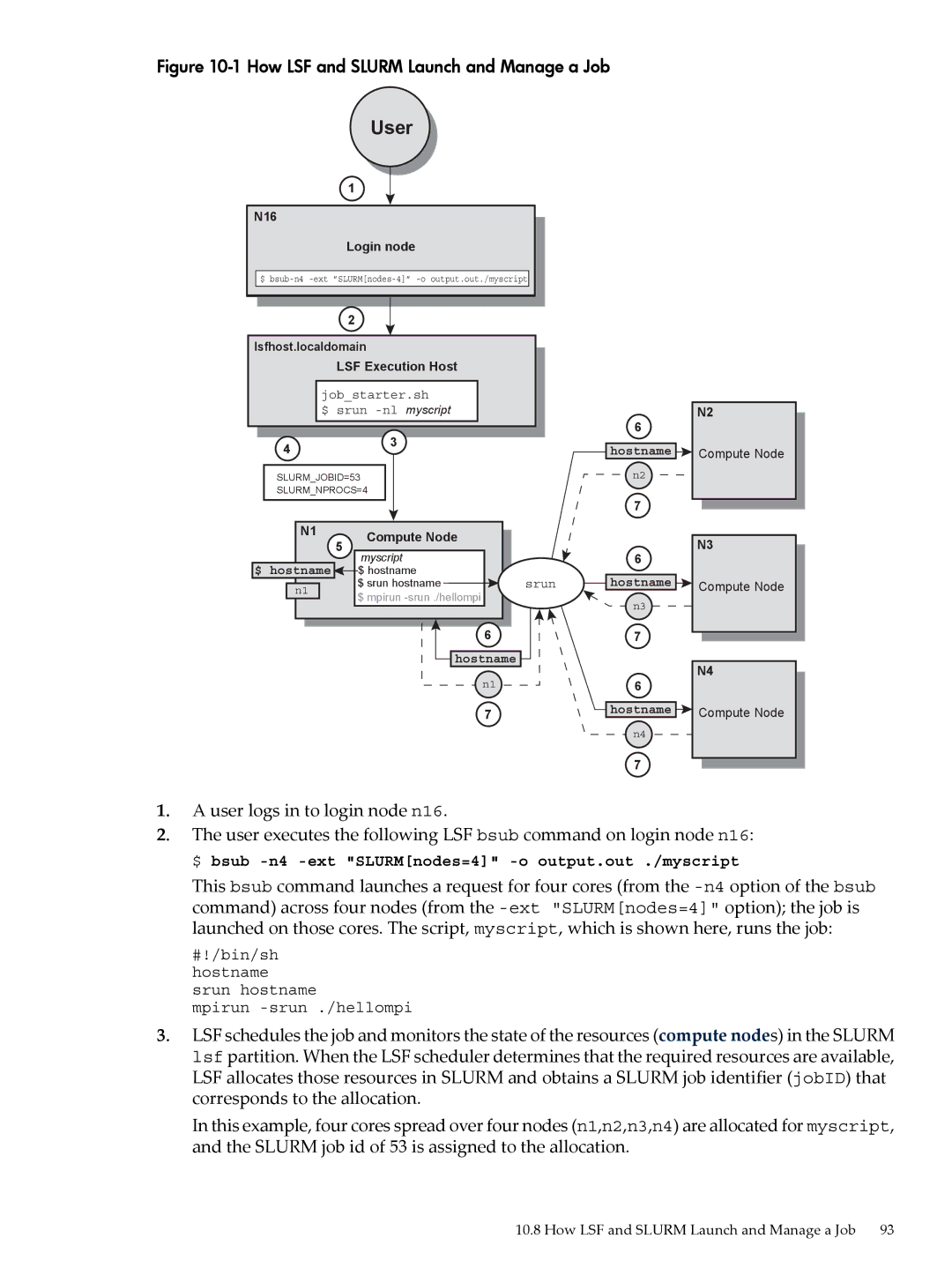

1. A user logs in to login node n16.

2. The user executes the following LSF bsub command on login node n16:

$ bsub

This bsub command launches a request for four cores (from the

#!/bin/sh
hostname
srun hostname
mpirun

3. LSF schedules the job and monitors the state of the resources (compute nodes) in the SLURM lsf partition. When the LSF scheduler determines that the required resources are available, LSF allocates those resources in SLURM and obtains a SLURM job identifier (jobID) that corresponds to the allocation.

In this example, four cores spread over four nodes (n1, n2, n3, and n4) are allocated for myscript, and SLURM job ID 53 is assigned to the allocation.
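
As a minimal sketch of how a job script might report the allocation described above, the following shell function prints the two SLURM variables that appear in Figure 10-1. It assumes that SLURM_JOBID and SLURM_NPROCS are exported into the job's environment; the function name describe_allocation and the fallback string "unset" are hypothetical and used only for illustration.

```shell
#!/bin/sh
# Sketch only: print the SLURM allocation details visible to this job.
# SLURM_JOBID and SLURM_NPROCS are the variables shown in Figure 10-1.
# describe_allocation is a hypothetical helper name, and "unset" is an
# illustrative fallback for when a variable is not defined.
describe_allocation() {
    printf 'SLURM job ID: %s\n' "${SLURM_JOBID:-unset}"
    printf 'Cores allocated: %s\n' "${SLURM_NPROCS:-unset}"
}

describe_allocation
```

With the values from this example (SLURM_JOBID=53 and SLURM_NPROCS=4), the function would print "SLURM job ID: 53" followed by "Cores allocated: 4".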
